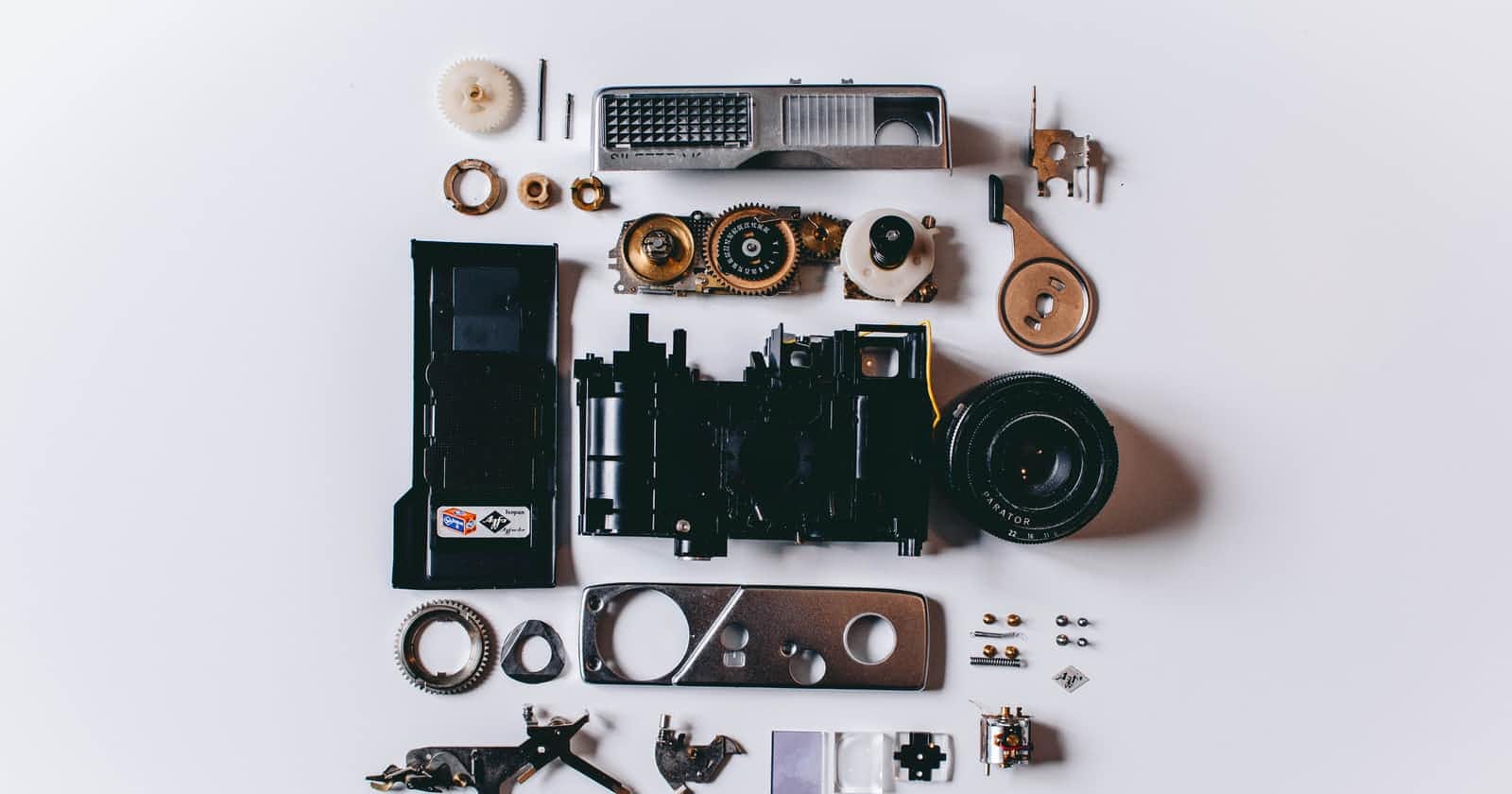

Photo by Shane Aldendorff on Unsplash

Outreachy BW3: What's all this about?

A 2,000+ word project update on how it started and how it's going.

Hello there!!

Clearly, since you've made it here, you have heard of Wikipedia. If you haven't (and I cannot believe this), then well, it's literally in the name. The term "Wikipedia" is a combination of "wiki," meaning "fast" in Hawaiian, and "encyclopedia." It is a free, multilingual online reservoir that covers all areas of knowledge and is authored and updated by a community of volunteers through open collaborations. Supported by the Wikimedia Foundation, the website has been here since the first year of the 21st century.

For someone like me, who grew up with little to no internet access until college, Wikipedia was (and still is) synonymous with the internet. The idea is so deeply ingrained in my memory that even after years as a CS grad, Wikipedia is the first thing I picture when talking about the intricacies of the World Wide Web. Despite its bare-bones look in an era of lush, interactive, JS-powered websites, Wikipedia holds an unthinkable amount of interconnected and indexed human knowledge, just a few clicks away from anyone on the internet. As of July 11, 2022, the English Wikipedia alone contains 6,537,018 articles (and averages 586 new articles per day!!) with over 90 times as many words as the 120-volume English-language Encyclopedia Britannica. The sheer size of this website makes it a go-to resource for almost anyone with an internet connection who wants to know things. It is certainly the most convenient resource for researchers working with languages. In particular, with the rise of Natural Language Processing (NLP), Wikipedia's vast and structured resources have gained even more attention.

NLP is the subfield of Artificial Intelligence that enables computers to understand human language. To achieve that goal, machines need to be fed large amounts of good-quality text from a plethora of domains. Data with these traits is very hard to come by in the wild. It may be behind paywalls, or it may not be structured enough to collect. Its metadata may be unreliable. It might lack the variation present in natural language, which is very important for training general-purpose NLP systems. Or there might simply not be enough of it to teach a learning system. In these cases, Wikipedia articles become the first refuge for NLP enthusiasts.

Well then, what seems to be the problem?

So here's the thing. Despite Wikipedia's attention to detail and its commitment to universal access, Wikipedia literacy is pretty low. The wiki jargon is a handful!! In fact, before my internship project, I had no idea about terms like wikitext, Parsoid, talk pages, transclusions, etc. Half of my internship has gone by, and I am still positively perplexed by many of these.

When NLP researchers want to access Wikipedia's data, they turn to the Wikipedia data dumps: snapshots of Wikipedia's contents at a given time. These dumps are neither complete nor consistent, and they are unwieldy in size. They store article content and metadata in different formats such as XML, SQL, and JSON. Wikipedia runs on MediaWiki, a PHP-based content management system that keeps all material in a backend database, written in a markup language called wikitext. The official dumps use the same compact markup. Collecting data from these dumps therefore requires the user to understand and manipulate wikitext syntax, which can be a real headache for someone who just wants some text for model training.

How do you find these dumps?

All the Wikimedia wikis, covering 300+ languages, are listed on this page. Within each wiki, pages are grouped into namespaces; right now, Wikipedia maintains 30 different namespaces that keep different kinds of content separate. The dump files contain the contents of these namespaces for a specific project (e.g., Wikipedia, Wikisource, Wikibooks), snapshot date, and language. So overall, as discussed earlier, collecting data from Wikipedia is not easy because there are a lot of bells and whistles to manage.
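To make namespaces a bit more concrete, here is a small Python sketch listing a handful of the standard namespace IDs on English Wikipedia (a subset, not all 30). It relies on a MediaWiki convention: each subject namespace has an even, non-negative ID, and its talk namespace is the next odd number. The `NAMESPACES` dictionary and the `is_talk_namespace` helper are illustrative names, not part of any library. The dump file names use these IDs, e.g. NS0 for articles.

```python
# Illustrative subset of the standard namespace IDs on English Wikipedia
NAMESPACES = {
    0: "(Main/Article)",
    1: "Talk",
    2: "User",
    3: "User talk",
    4: "Wikipedia",  # the wiki's "Project" namespace
    6: "File",
    10: "Template",
    14: "Category",
}


def is_talk_namespace(ns_id: int) -> bool:
    """Subject namespaces have even IDs >= 0; each talk namespace is the next odd ID.

    Negative IDs (Special = -1, Media = -2) have no talk namespace.
    """
    return ns_id >= 0 and ns_id % 2 == 1


for ns_id, name in NAMESPACES.items():
    kind = "talk" if is_talk_namespace(ns_id) else "subject"
    print(f"NS{ns_id}: {name} ({kind})")
```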

Fortunately for us, Wikimedia Enterprise has recently released its Enterprise HTML dumps, produced by parsing the wikitext of articles into HTML. Processing these dumps is the main target of our project.

What is the catch?!

When users download the Wikipedia dump files directly, they have to go through the tedious and time-consuming process of extracting the information they need from the wikitext in these compressed dumps. Using the MediaWiki APIs for this task instead is not ideal either, because it is computationally expensive at scale and discouraged for large projects. Several tools, like WikiExtractor and mwparserfromhell, are available to extract data directly from wikitext, some of them by expanding modules and templates internally. The issue with such wikitext parsing is that it is far from perfect: large discrepancies can remain between what these parsers extract and what readers actually see on the rendered page. To learn more, see this paper by Mitrevski et al., who perform an in-depth empirical analysis of the issues related to wikitext parsing. In summary, such information loss is a significant obstacle for researchers who want to use Wikipedia dumps for their NLP training tasks.
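To see how naive wikitext handling can lose information, here is a deliberately simplistic Python sketch. It is not how WikiExtractor or mwparserfromhell work; it just strips markup with regular expressions, without expanding templates. The snippet and the `naive_strip` helper are hypothetical. On a rendered page, the `{{convert|5|km|mi}}` template would show up as a distance with its conversion into miles, but here it simply disappears.

```python
import re

# Hypothetical wikitext snippet. When rendered, {{convert|5|km|mi}} expands
# to the distance plus its conversion into miles.
WIKITEXT = "The trail is {{convert|5|km|mi}} long and ends at [[Lake Tahoe|the lake]]."


def naive_strip(wikitext: str) -> str:
    """Strip wikitext markup WITHOUT expanding templates (illustration only)."""
    # Drop (non-nested) templates entirely: {{...}}
    text = re.sub(r"\{\{[^{}]*\}\}", "", wikitext)
    # Keep only the label of [[target|label]], or the target of [[target]]
    text = re.sub(r"\[\[(?:[^|\]]*\|)?([^\]]*)\]\]", r"\1", text)
    # Collapse runs of whitespace left behind by removed markup
    return re.sub(r"\s{2,}", " ", text)


print(naive_strip(WIKITEXT))  # The trail is long and ends at the lake.
```

The output is grammatical but silently missing its key fact, which is exactly the kind of degradation that working with rendered HTML helps avoid.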

Then what can be done about this?!

When rendering content, MediaWiki converts wikitext to HTML, expanding macros (templates and modules) to include more material. The HTML version of a Wikipedia page therefore generally contains more information than the source wikitext. So it's reasonable that academics who want to look at Wikipedia through the eyes of its users would prefer to work with HTML rather than wikitext. This is where our project comes in. In recent weeks, the Wikimedia Enterprise HTML dumps have been made publicly available, with regular monthly updates, so that researchers may use them in their work. Developed for high-volume reusers of wiki content, these dump files are created for a certain set of namespaces and wikis. Each dump output file is a tar.gz archive containing a single file. When uncompressed and untarred, that file contains one article per line in JSON format. The major attributes of these dump files are listed here.
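Because each archive holds one file with one JSON article per line, it can be streamed rather than loaded into memory all at once. Below is a minimal Python sketch assuming exactly that layout; `iter_dump_articles` and `make_demo_archive` are hypothetical helpers, and the demo builds a tiny in-memory archive so it runs without a real dump.

```python
import io
import json
import tarfile
from typing import IO, Iterator


def iter_dump_articles(fileobj: IO[bytes]) -> Iterator[dict]:
    """Yield one parsed JSON object per line from a .json.tar.gz dump.

    Assumes the archive holds regular files with one JSON article per line.
    Lines are read lazily, so the whole dump never has to fit in memory.
    """
    with tarfile.open(fileobj=fileobj, mode="r:gz") as tar:
        for member in tar:
            fin = tar.extractfile(member)
            if fin is None:  # directories and special entries have no content
                continue
            with fin:
                for line in fin:
                    if line.strip():  # skip blank lines
                        yield json.loads(line)


def make_demo_archive(records: list) -> io.BytesIO:
    """Hypothetical helper: build a tiny in-memory .tar.gz in the dump layout."""
    payload = "".join(json.dumps(r) + "\n" for r in records).encode("utf-8")
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w:gz") as tar:
        info = tarfile.TarInfo(name="demo_0.ndjson")
        info.size = len(payload)
        tar.addfile(info, io.BytesIO(payload))
    buf.seek(0)
    return buf


if __name__ == "__main__":
    archive = make_demo_archive([{"name": "Earth"}, {"name": "Moon"}])
    for article in iter_dump_articles(archive):
        print(article["name"])
```

With a real dump, the same generator could be given an open file handle, e.g. `open(path, "rb")`.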

You can download the six most recent Enterprise HTML dumps from this location. You can also access them directly from the PAWS server hosted by Wikimedia, which is essentially a hosted Jupyter Notebook environment with shell support. On the PAWS server, the dumps live under the "/public/dumps/public/other/enterprise_html/runs" directory, in a subdirectory named after the dump date. To load a specific dump file from the server, the file name follows this pattern:

`<language code><project>-NS<namespace id>-<date>-ENTERPRISE-HTML.json.tar.gz`
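Since these paths are so regular, they are easy to build and take apart in code. The following is a hypothetical pair of Python helpers (`dump_path` and `parse_dump_name`), assuming the PAWS layout described above (a date subdirectory under the runs directory) and assuming the project suffixes listed in the regular expression; other wikis may use suffixes that are not covered here.

```python
import posixpath
import re

# Directory on the PAWS server that holds the Enterprise HTML dumps
DUMP_DIR = "/public/dumps/public/other/enterprise_html/runs"

# Assumed set of project suffixes; longer names are tried before plain "wiki"
DUMP_NAME_RE = re.compile(
    r"^(?P<lang>[a-z_]+?)"
    r"(?P<project>wiktionary|wikibooks|wikisource|wikiquote|wikinews"
    r"|wikivoyage|wikiversity|wiki)"
    r"-NS(?P<namespace>\d+)-(?P<date>\d{8})"
    r"-ENTERPRISE-HTML\.json(?:\.tar\.gz)?$"
)


def dump_path(lang: str, project: str, namespace: int, date: str,
              dump_dir: str = DUMP_DIR) -> str:
    """Build <dump_dir>/<date>/<lang><project>-NS<ns>-<date>-ENTERPRISE-HTML.json.tar.gz."""
    fname = f"{lang}{project}-NS{namespace}-{date}-ENTERPRISE-HTML.json.tar.gz"
    return posixpath.join(dump_dir, date, fname)


def parse_dump_name(path: str) -> dict:
    """Split a dump file name back into lang, project, namespace and date."""
    match = DUMP_NAME_RE.match(posixpath.basename(path))
    if match is None:
        raise ValueError(f"not an Enterprise HTML dump name: {path}")
    parts = match.groupdict()
    parts["namespace"] = int(parts["namespace"])
    return parts


if __name__ == "__main__":
    path = dump_path("en", "wiki", 0, "20220420")
    print(path)
    print(parse_dump_name(path))
```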

Here's a snippet showing how I loaded and read a portion of a large dump file on the PAWS server:

```python
import json
import os
import tarfile

lang = "en"  # language code for English
project_name = "wiki"  # project suffix: "wiki" = Wikipedia
namespace = 0  # 0 for Main/Articles
date = "20220420"

# directory on the PAWS server that holds the Enterprise HTML dumps
DUMP_DIR = "/public/dumps/public/other/enterprise_html/runs"

# final file path: <DUMP_DIR>/<date>/<lang><project>-NS<namespace>-<date>-ENTERPRISE-HTML.json.tar.gz
HTML_DUMP_FN = os.path.join(
    DUMP_DIR,
    date,
    f"{lang + project_name}-NS{namespace}-{date}-ENTERPRISE-HTML.json.tar.gz",
)

print(f"Reading {HTML_DUMP_FN} of size {os.path.getsize(HTML_DUMP_FN) / (1024 ** 3):0.3f} GB")

article_list = []

with tarfile.open(HTML_DUMP_FN, mode="r:gz") as tar:
    html_fn = tar.next()  # the archive holds a single JSON file
    print(f"We will be working with {html_fn.name} ({html_fn.size / 1_000_000_000:0.3f} GB).")

    # read only the first article, i.e. the first line of the JSON file
    with tar.extractfile(html_fn) as fin:
        for line in fin:
            article = json.loads(line)
            article_list.append(article)
            break
```

The introduction of HTML dumps mitigates some of the drawbacks of using wikitext for corpus development and other research. Researchers can now use HTML dumps (and they should). However, parsing HTML to extract the necessary information is not a simple process. An average user may know how to work with HTML but not be used to the specific format of the dump files. Also, the HTML that MediaWiki generates from wikitext has many edge cases, and it takes heavy digging through the documentation to get a grasp of its structure. Identifying features in this HTML is no trivial task! Because of all these hassles, people are likely to keep working with wikitext, since ready-to-use parsers already exist for it, and consequently to face misrepresented information. This is why we want to provide an accessible HTML parser that lowers the technical barrier so that more users can take advantage of this resource. Therefore, "the aim of this project is to write a Python library that provides an interface to work with html dumps and extract the most relevant features from an article." The library will be integrated into the existing set of tools for working with Wikimedia resources as part of mediawiki-utilities.

How are we doing this?

A Wikipedia article is an intricately structured piece of content. It has many features to extract, such as sections, headings, internal links, external links, templates, categories, media, references, plain text, formatting, and tables. Each of these has additional subtypes and attributes, most of which are defined in this specification sheet. As the documentation does not cover every case, identifying the markers for these components requires rigorous observation and testing at every stage. Each week, my mentors and I sit down to take a crack at a couple of elements at a time. We do our best to establish some conventions and identify non-obvious patterns. Many things need to be kept track of while building this project, ranging from existing projects for other data formats to the various library dependencies we use (like BeautifulSoup).
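As a taste of what identifying these markers looks like, here is a dependency-free Python sketch using the built-in `html.parser` (the project itself uses BeautifulSoup). It assumes the Parsoid HTML conventions for links and categories: internal links are `<a rel="mw:WikiLink">`, external links carry `rel="mw:ExtLink"`, and categories appear as `<link rel="mw:PageProp/Category">`. The `LinkCollector` class and the sample HTML are made up for illustration; this is not the library's implementation, and interwiki links (`mw:WikiLink/Interwiki`) are deliberately ignored.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect links and categories from Parsoid-style HTML (illustration only)."""

    def __init__(self) -> None:
        super().__init__()
        self.wikilinks = []   # (href, title) pairs of internal links
        self.external = []    # hrefs of external links
        self.categories = []  # hrefs of category links

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        # rel may hold several space-separated tokens
        rels = (attrs.get("rel") or "").split()
        href = attrs.get("href")
        if tag == "a" and "mw:WikiLink" in rels:
            self.wikilinks.append((href, attrs.get("title")))
        elif tag == "a" and "mw:ExtLink" in rels:
            self.external.append(href)
        elif tag == "link" and "mw:PageProp/Category" in rels:
            self.categories.append(href)


# Made-up fragment in the Parsoid style
SAMPLE_HTML = (
    '<p>The <a rel="mw:WikiLink" href="./Python_(programming_language)" '
    'title="Python (programming language)">Python</a> language, see '
    '<a rel="mw:ExtLink" href="https://www.python.org">python.org</a>.</p>'
    '<link rel="mw:PageProp/Category" href="./Category:Programming_languages"/>'
)

collector = LinkCollector()
collector.feed(SAMPLE_HTML)
collector.close()
print(collector.wikilinks)
print(collector.external)
print(collector.categories)
```

Keying on `rel` values rather than CSS classes or URL shapes is what makes this kind of extraction robust; the hard part, as described above, is discovering which markers hold across all the edge cases.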

The library is currently under development in this repo.

- For starters, we have made a list of all the potential elements we want to extract and their corresponding properties. To do this, we take help from the conventions used in existing wikitext parser libraries like mwparserfromhell.

- We use a test-driven iterative process in developing the library. Separate modules are written for dump processing and parsing.

- As dump files are significantly larger than the memory of typical user machines, we have to process them in chunks. Dump members are converted to instances of an Article class.

- In the Parsing module, we create the class definitions of the various elements we have listed earlier and their corresponding extraction methods.

- The whole development process is being extensively documented at each stage, so we can always trace back our coding motivations and also, have a more vivid picture for future developers to understand the project trajectory.
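The chunked processing and the Article class described above could look roughly like the following Python sketch. It is hypothetical, not the library's actual code: the `Article` fields, the assumed JSON keys (`name` and `article_body.html`), and the `batched_articles` helper are all illustrative. Because the batches are produced lazily with `itertools.islice`, only one batch of articles is kept in memory at a time.

```python
import json
from dataclasses import dataclass
from itertools import islice
from typing import Iterable, Iterator, List, Union


@dataclass
class Article:
    """Hypothetical minimal article record; the real library's class may differ."""

    name: str
    html: str

    @classmethod
    def from_json_line(cls, line: Union[str, bytes]) -> "Article":
        data = json.loads(line)
        # Assumed keys from the dump's JSON schema; adjust if they differ.
        return cls(name=data["name"], html=data["article_body"]["html"])


def batched_articles(
    lines: Iterable[Union[str, bytes]], batch_size: int = 1000
) -> Iterator[List[Article]]:
    """Lazily convert JSON lines to Article objects in fixed-size batches."""
    articles = (Article.from_json_line(line) for line in lines if line.strip())
    while True:
        batch = list(islice(articles, batch_size))
        if not batch:
            return
        yield batch


if __name__ == "__main__":
    # Five synthetic articles standing in for lines of a real dump
    demo_lines = [
        json.dumps({"name": f"Page {i}", "article_body": {"html": f"<p>{i}</p>"}})
        for i in range(5)
    ]
    for batch in batched_articles(demo_lines, batch_size=2):
        print([article.name for article in batch])
```

In the same test-driven spirit, a check such as "five synthetic articles split into batches of two give batches of 2, 2, and 1" is easy to turn into a unit test.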

Does this mean that we have it all figured out now?!

In this week's blog, I wanted to give an idea of where I started: a complete beginner with no idea about MediaWiki, its related tools, or the associated issues. Since the contribution phase of the internship, I have gradually immersed myself in some of these topics, and right now I am at a stage where I feel confident enough to start building a tool for this niche ecosystem (of course, with the incessant support of my amazing mentors!!). There is still a long way to go for this project, and we have only dipped our toes into the first pool of functionality. For the moment, we have yet to extract several of the minor elements, like tables and infoboxes. At the same time, we still haven't figured out how to extract a continuous stream of noise-free plain text from the various components of an article. Once all those features are implemented, we will also need to write better tutorials and documentation to make the transition from wikitext to HTML a smoother experience for users. I would definitely love to wrap all of this up within my internship, but that would be a significantly tough challenge for me. Still, this has been one of the most exciting tasks I've had in a while, so who knows, I might just pull it off in the next few weeks!!

Happy Contributing everyone!

#WomenWhoTech #Outreachy #Wikimedia